This year, one topic has dominated the conversation in both tech and business spheres: AI. The excitement kicked off when OpenAI launched ChatGPT. Suddenly, this tech was no longer reserved for specialists; it was available to everyone. Facebook and Google followed suit, announcing their own Large Language Models (LLMs).

We've been watching this evolution unfold for the past six months, and it's been nothing short of remarkable. It almost feels like these LLMs are becoming more powerful and versatile every day. This rapid progress has sparked talk of 'Skynet' and 'Armageddon'. As humans, we tend to project today's reality onto tomorrow. So, we naturally expect the same pace to continue when we see the advancements made in the last six months.

I was discussing this with Daniel Priestley last week, and he shared a fascinating insight. At MIT's Imagination in Action conference, Sam Altman suggested that LLMs might have already reached their optimal size and may not grow much more: “I think there’s been way too much focus on parameter count. Yes, it may still increase. But this reminds me a lot of the gigahertz race in chips in the 1990s and 2000s, where everyone was focused on the highest number.”

The progress in AI that we've been witnessing isn't a slow and steady journey; it's more like a sudden leap. We're currently experiencing a boom in possibilities thanks to the arrival of LLMs, and their capabilities will continue to grow. But it's likely that, as with the computer chip power surge of two decades ago, this growth rate will eventually stabilise. Skynet might not be as inevitable as we thought.

This aligns well with an article I wrote in May, where I proposed that we'd likely see the development of smaller, specialist LLMs tailored to specific tasks, rather than continuously growing generalist ones.

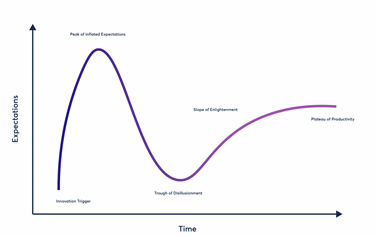

Altman's mention of the race to increase computer chip clock speeds is a good analogy. If you've heard one of my talks in the past few years, you'll likely have heard me talk about the Gartner Hype Cycle. With each new technology, an initial surge of excitement eventually gives way to disappointment as reality sets in. Over time - often years - the technology's capabilities continue to develop until it quietly achieves the potential that everyone was excited about initially. By then, the technology is typically more user-friendly and accessible, and as people start to recognise its potential in everyday life, excitement re-emerges, and the tech becomes an overnight success.

Take computers, for example. Most people associate the advent of computers with the 1980s, while tech enthusiasts might point to the 1970s with the invention of the microprocessor. But the first computer, Charles Babbage's 'difference engine', dates back to 1822. This mechanical device was designed to output its results as hard copies, and Babbage's later work even had the help of the first computer programmer, Ada Lovelace.

These early, unwieldy machines continued to evolve with innovations like punch cards, vacuum tubes, and printouts, but they were not easy to use. The first computer game appeared on a 6” cathode ray tube in the 1940s, and the first microprocessor arrived in 1971. It wasn't until 1981, when Xerox introduced the graphical user interface along with the mouse, that computers really began to hit the mainstream. Steve Jobs saw the potential of this technology and incorporated it into Apple computers.

With a graphical user interface and a mouse, computers became accessible to people without a programming background. Bill Gates's vision of a computer in every home and office started to look not only feasible but likely, and thus began the computer revolution.

Now, let's look at mobile phones. The first mobile phone patent dates back to 1903, with the first mobile phone service launching in 1926 and the first handheld mobile phone being demonstrated in 1973. In 1996, only 16% of UK households owned a mobile phone. A decade later, that figure had skyrocketed to 80%, thanks in part to innovations by Nokia, BlackBerry, and of course, Apple. The iPhone's debut marked the dawn of the smartphone era, and today it's rare to find anyone over 13 without one.

The pattern was similar with the internet. I was engaging with people across the globe on bulletin boards as early as 1986, but it wasn't until the web browser took hold in the nineties that the internet became truly mainstream.

In each case, we see an initial burst of excitement about the innovation, followed by disappointment as reality sets in, before the technology matures and becomes more accessible. Once it reaches a stage where the majority can use it, its potential is fully unleashed.

Three years ago, I made a video about the birth of LLMs, focusing specifically on GPT-3 and how I believed these models would impact our work. But it was only six months ago that OpenAI made this technology accessible by overlaying a chat interface on top of the GPT models. That's when the AI explosion really took off. However, it's crucial to remember that this burst of potential is a step up, not a continuing trajectory. I expect things to slow down within the next 12 months as we gradually improve our ability to interact with and use LLMs on a daily basis.

Just as the internet didn't keep transforming indefinitely but instead became gradually faster and more widely available, we'll see a similar trend with AI. Nowadays, no one worries about whether they have a 100 Mbps or 1 Gbps connection – it's just there when needed. The same goes for computers and smartphones; the tech keeps improving, but the past few years have seen relatively little change.

Every tech revolution follows the same pattern: arrival, disappointment, evolution, boom, and finally, a levelling out. We're in the boom phase of AI now, but eventually, there will be a levelling out. The way we interact with AI, using natural language to ask computers to carry out tasks, will simply become the usual way we do things. A useful, exciting and fun normal, but still normal.